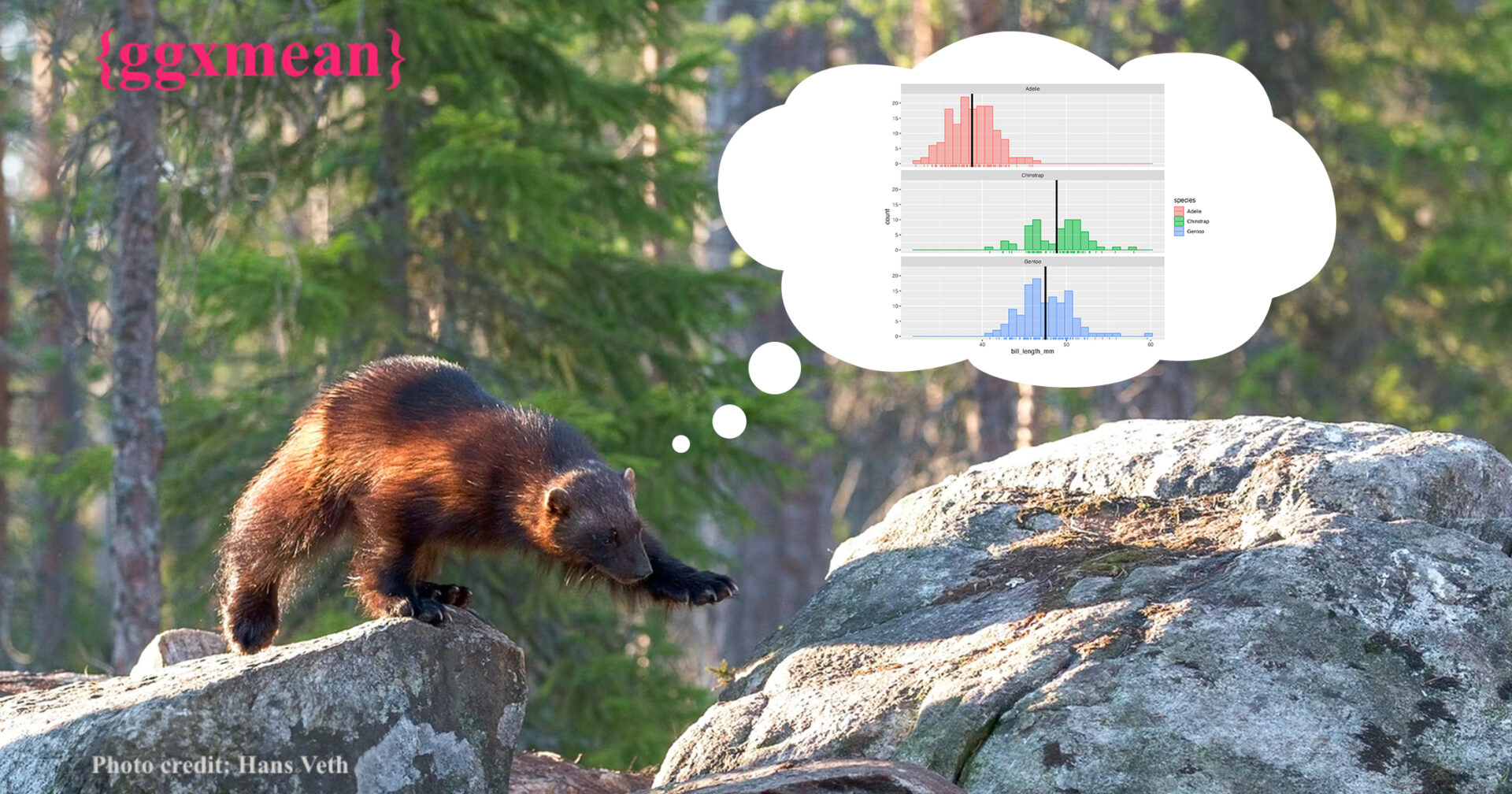

New statistical geoms in {ggxmean}

Gina Reynolds, Morgan Brown, and Madison McGovern unveil {ggxmean}, a package that helps users add statistical concepts to plots made with {ggplot2}.

2022-08-12